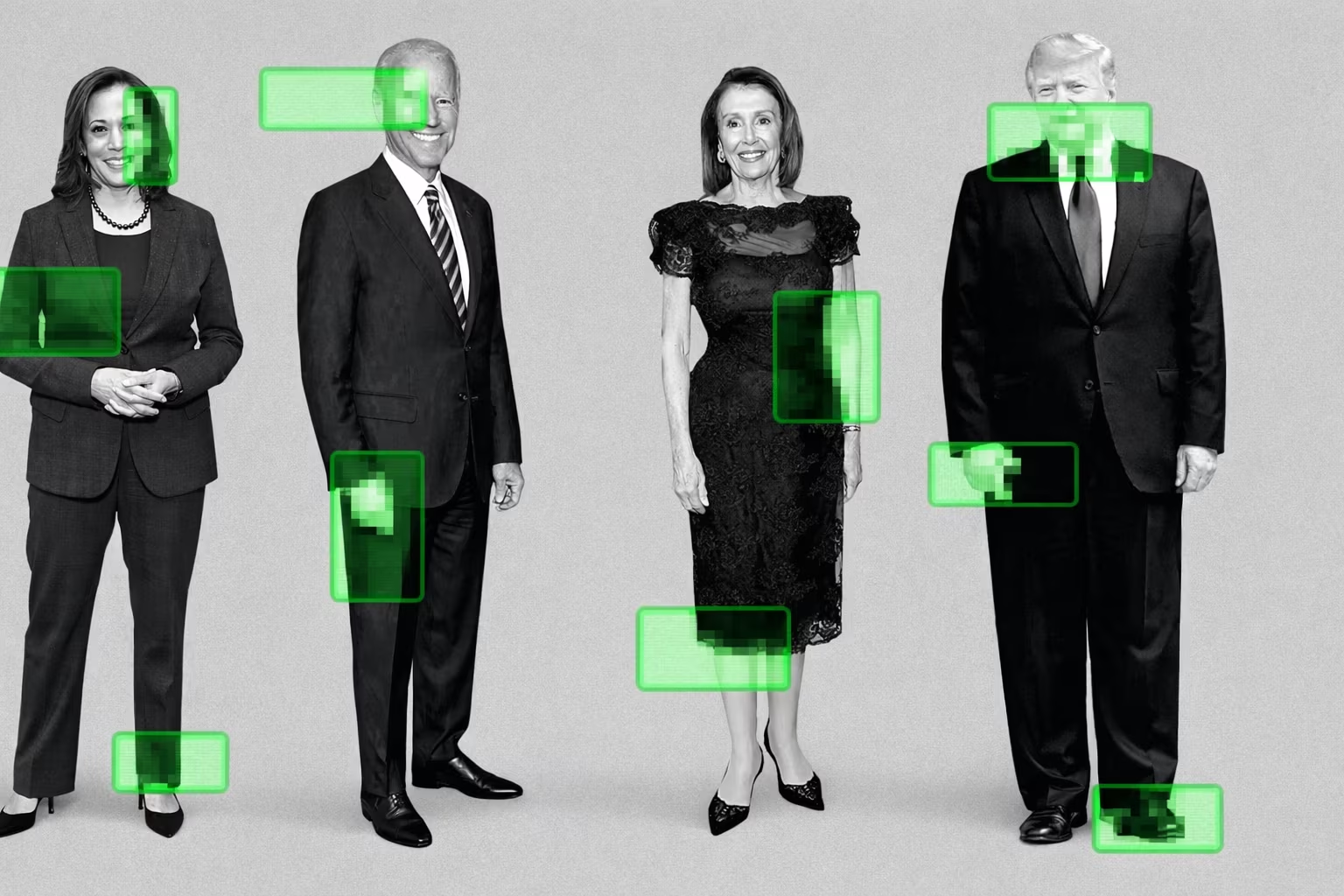

YouTube is expanding the use of a tool designed to detect impersonation, extending it to a limited group of government officials, political candidates, and journalists.

The move matters because rapidly advancing artificial intelligence systems are worsening the deepfake problem, making it easier to produce convincing videos of real people, including President Donald Trump. According to Leslie Miller, YouTube’s vice president for government affairs and public policy, “this expansion primarily concerns the integrity of public discourse.” She emphasized that “we recognize that the risks of AI-driven impersonation are particularly high for people working in the civic and political sphere.”

YouTube’s likeness detection technology scans videos uploaded to the platform for signs that a specific person’s appearance, above all their face, has been used. If the system finds a match, the person concerned can review the flagged video and request its removal through the platform’s privacy complaint process.

However, such a request does not automatically lead to removal: parody and satire remain permitted on the platform. YouTube declined to disclose who is participating in the current pilot program and did not confirm whether President Trump has been granted access. Participation requires identity verification, which involves submitting a government-issued ID and a video selfie.

The initiative is part of a broader trend in which technology companies are introducing additional safeguards against AI abuse and identity spoofing. YouTube’s chief executive, Neal Mohan, has previously said that one of the company’s key priorities for 2026 will be greater transparency around the use of artificial intelligence and stronger protective measures, including labeling AI-generated content and removing harmful synthetic material.

The development of the likeness detection tool began in 2024 in partnership with the Creative Artists Agency. At the time, the system was tested with well-known creators on the platform, including MrBeast and Marques Brownlee. Last year, access to the tool was expanded to all content creators.

At the same time, the company notes that over the past year users with access to the tool have submitted relatively few removal requests. YouTube’s vice president for creator products, Amjad Hanif, explained that “in most cases such content turns out to be fairly harmless or even complements their core business.”

In the future, YouTube plans to open access to the system to all government officials, political candidates, and journalists. For now, the tool is focused exclusively on facial recognition, though the company is already exploring the possibility of detecting voice impersonation.

In addition, YouTube is considering an option that would let users earn revenue when their likeness is used in detected content, similar to the Content ID system.

Deepfakes and AI-driven impersonation remain a source of concern for Congress and the Trump administration. Last year, Trump signed the TAKE IT DOWN Act, a law aimed at combating the distribution of intimate images without consent, including those created with deepfake technology.

However, broader legislation addressing deepfakes or requiring content labeling during election periods will be far more difficult to pass through Congress and is unlikely to emerge before the midterm elections.

One bill to watch remains the federal NO FAKES Act, supported by YouTube. It would require platforms to respond promptly to requests for the removal of content using AI-generated likenesses. According to Miller, “this law could become an important foundation—both in the United States and around the world—for ensuring that technology serves human creativity, rather than the other way around.”