Nvidia’s chips are improving so quickly that it is hard to find a historical precedent for the pace.

That matters because, without such progress, physical constraints would quickly slow the expansion of data centers. The issue is drawing growing attention as artificial intelligence reshapes society.

Nvidia’s chief executive, Jensen Huang, said on Monday that the company expects to generate “no less than” $1 trillion in revenue from its latest chips by 2027.

A month ago, Nvidia reported record sales and profits, driven by a sharp surge in orders from major technology companies building out data-center infrastructure.

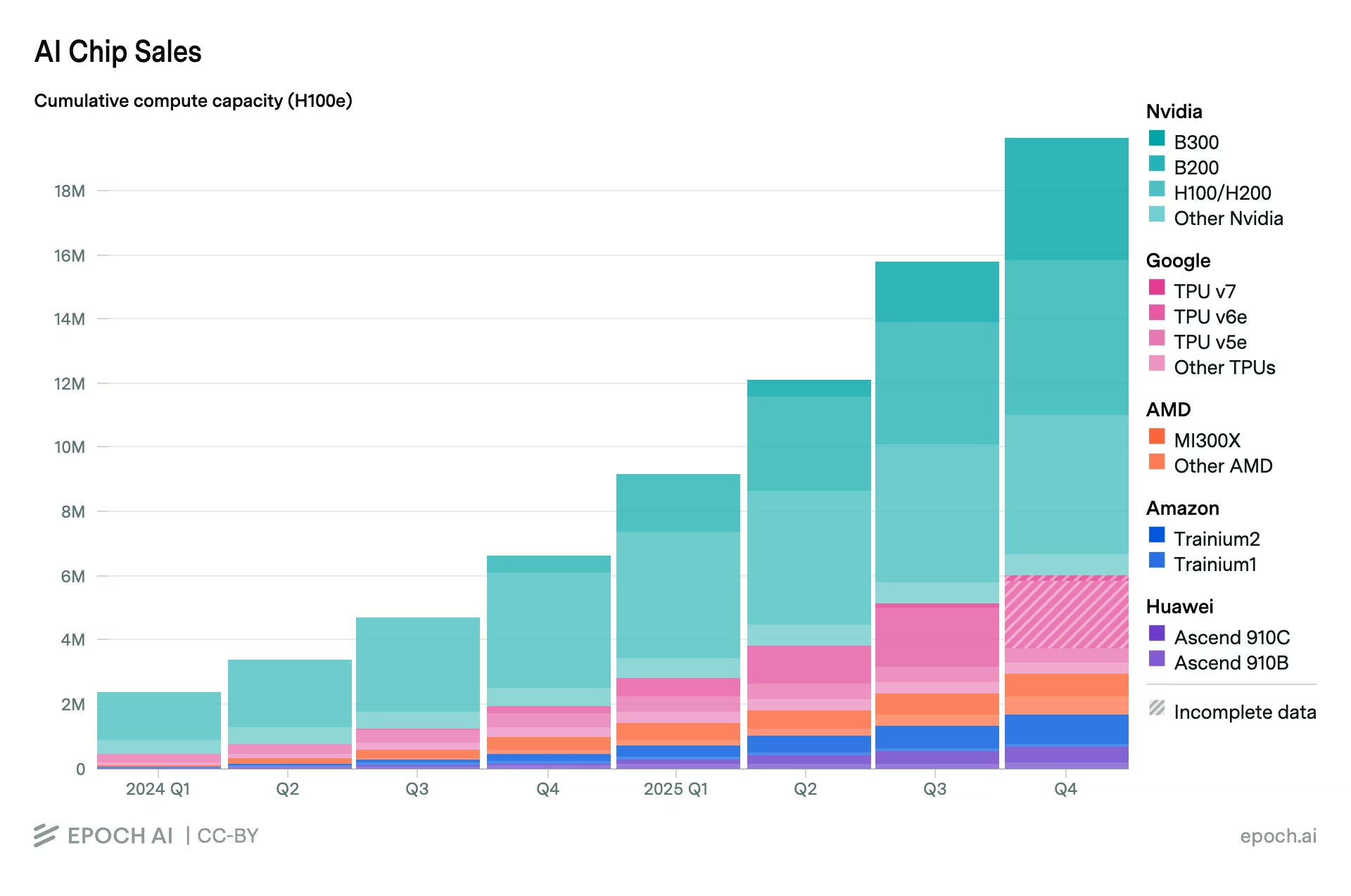

Nvidia once effectively controlled the entire market for AI chips. Its share has since declined, from 100% in the first quarter of 2022 to 65% in the fourth quarter of last year, according to the research and consulting firm SemiAnalysis.

Chart: Sales of artificial intelligence chips

Artificial intelligence runs on electricity, and Nvidia’s chips largely determine how efficiently that electricity is used.

The chips themselves, flat components roughly the size of a postage stamp, remain the core of any data center. Each new generation delivers a sharp increase in performance per chip, even as AI’s overall demand for energy continues to surge. “Chips are being redesigned from the ground up, because efficiency determines how quickly intelligence can scale,” Jensen Huang wrote in a rare blog post last week. “Energy is becoming the central constraint, as it sets the upper limit on how much intelligence can be produced at all.”

Nvidia, however, faces a new risk as the industry shifts from training models to deploying them, a stage known as inference. Its chip architecture is more heavily optimized for training. “This entire inference story represents a major threat to Jensen, because everything here revolves around efficiency,” Paul Kedrosky, a venture investor and fellow at the MIT Initiative on the Digital Economy, told The Wall Street Journal. “He is desperately trying to find a way to extend his dominance into inference as well.”

Energy efficiency has long been a mundane, if important, aspect of technological progress. It was typically associated with low-energy household appliances or cars like the Toyota Prius, which save money while reducing environmental impact. In the age of AI, however, it is no longer an added advantage but a necessity.

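The supply of electricity is physically constrained, whereas demand for AI computation appears to have no ceiling. If the power available to a data center is fixed, the only way to get more computation out of it is to get more computation out of each watt. Under those conditions, energy efficiency becomes the foundation for any rapid expansion of computing power.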

The pace of change is comparable to moving from the Ford Model T to a Tesla in less than a decade—instead of more than a century. According to Josh Parker, Nvidia’s head of sustainability, if automotive fuel efficiency improved as quickly as chips are advancing, “we could drive to the Moon and back on a single gallon of fuel.”

As far back as 1865, the British economist William Stanley Jevons observed that more efficient coal-fired steam engines in England led not to a reduction but to an increase in coal consumption. The phenomenon became known as the Jevons paradox: gains in energy efficiency, contrary to expectations, stimulate so much additional demand that total energy use rises.

Artificial intelligence is amplifying this effect many times over. “The absolute footprint of AI in terms of energy consumption continues to grow year after year, and we expect this trend to persist,” Parker noted.
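The rebound mechanism can be made concrete with a toy model. The Python sketch below assumes a constant-elasticity demand curve for computation; that curve is an illustrative assumption, not a model used by Jevons, Nvidia, or Parker, and the function `total_energy` and its parameters are hypothetical. Doubling efficiency halves the energy cost of each unit of computation, and whether total energy use then falls or rises depends on how strongly demand responds to that lower cost (the elasticity).

```python
def total_energy(efficiency: float, elasticity: float,
                 scale: float = 1.0, energy_price: float = 1.0) -> float:
    """Energy consumed under a hypothetical constant-elasticity demand model.

    efficiency: units of computation delivered per unit of energy
    elasticity: price elasticity of demand for computation (assumed constant)
    scale:      arbitrary demand level (hypothetical)
    """
    cost_per_unit = energy_price / efficiency         # energy cost of one unit of computation
    demand = scale * cost_per_unit ** (-elasticity)   # computation demanded at that cost
    return demand / efficiency                        # energy needed to supply that demand


# Compare energy use before and after a doubling of efficiency.
for elasticity in (0.5, 1.0, 1.5):
    before = total_energy(1.0, elasticity)
    after = total_energy(2.0, elasticity)
    print(f"elasticity={elasticity}: energy changes by x{after / before:.2f}")

# Prints:
# elasticity=0.5: energy changes by x0.71
# elasticity=1.0: energy changes by x1.00
# elasticity=1.5: energy changes by x1.41
```

In this simplified setting, an elasticity above 1 means the extra demand outweighs the per-unit savings and total energy use grows, which is the Jevons paradox in its simplest form; below 1, efficiency gains do cut total consumption.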

A chip’s efficiency depends, among other factors, on how much power it draws and how it is cooled. The physics is inescapable: all the electrical energy a chip consumes ultimately turns into heat that must be removed.

Cooling systems fall into two main categories. Traditional air-cooled data centers often rely on evaporative technologies, which require substantial volumes of water. More advanced liquid-cooling systems can reduce water usage, although the overall savings depend on the specific design and location. “Chips and servers are useless if you don’t have power and cooling,” said Rich Whitmore, who leads liquid-cooling efforts at Motivair, a Schneider Electric division that works with Nvidia.
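Because the electricity drawn by the chips ends up as heat, a back-of-envelope calculation shows why evaporative cooling consumes so much water. The Python sketch below is an idealized estimate, not a figure from the article or from Motivair: it assumes that all of the heat is rejected by evaporating water, uses an approximate latent heat of vaporization of 2.45 MJ/kg (roughly the value near 20 °C), and treats 1 kg of water as 1 liter. The function `water_evaporated_liters` and the 1 MW load are hypothetical.

```python
# Approximate latent heat of vaporization of water near 20 °C (textbook value).
LATENT_HEAT_MJ_PER_KG = 2.45
MJ_PER_KWH = 3.6  # 1 kWh = 3.6 MJ


def water_evaporated_liters(it_load_kw: float, hours: float) -> float:
    """Idealized water evaporated if ALL heat from a computing load is
    rejected by evaporation (hypothetical helper for illustration).

    Assumes electrical energy drawn by the chips becomes heat one-for-one
    and that 1 kg of water is about 1 liter.
    """
    heat_mj = it_load_kw * hours * MJ_PER_KWH   # electrical energy in = heat out
    return heat_mj / LATENT_HEAT_MJ_PER_KG      # kg (about liters) of water evaporated


# A hypothetical 1 MW load running for one day:
print(f"{water_evaporated_liters(1_000, 24):,.0f} liters")  # → 35,265 liters
```

Actual water use depends on the cooling design, the climate, and how much of the heat is rejected by evaporation rather than by other means, which is one reason designs that rely less on evaporation can use less water.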

Each generation of Nvidia chips, named after renowned scientists, has delivered a substantial leap in efficiency. The latest architecture, Blackwell, fundamentally rethinks the approach to computing and delivers higher performance with better energy efficiency, said Dion Harris, Nvidia’s senior director of AI infrastructure.

If the industry has gone from the Model T to a Tesla over the past decade, it is almost impossible to picture what the next few years will bring. “Perhaps it will be some kind of hovercraft,” Parker joked.